Multiple Machine Learning Algorithms Comparison for Modulation Type Classification Based on Instantaneous Values of the Time Domain Signal and Time Series Statistics Derived from Wavelet Transform

Volume 6, Issue 1, Page No 658–671, 2021

Adv. Sci. Technol. Eng. Syst. J. 6(1), 658–671 (2021)

DOI: 10.25046/aj060172

Keywords: Cognitive radio, Machine learning, Modulation classification

Modulation type classification is a part of waveform estimation, which is required to employ spectrum sharing scenarios such as dynamic spectrum access that allow more efficient spectrum utilization. In this work, multiple classification features, feature extraction methods, and classification algorithms for modulation type classification have been studied and compared in terms of classification speed and accuracy, in order to suggest the optimal algorithm for deployment on our target application hardware. The machine learning classifiers have been trained and validated using artificial data. Using instantaneous values of the time domain signal as features has shown acceptable performance for binary classification between BPSK and 2FSK: both ensemble boosted trees with 30 decision tree learners trained using AdaBoost sampling and fine decision trees have shown optimal performance in terms of both average classification accuracy (86.3 % and 86.0 %) and classification speed (120 0000 objects per second) for an additive white Gaussian noise (AWGN) channel with signal-to-noise ratio (SNR) ranging between 1 and 30 dB. However, for classification between five modulation classes, the average classification accuracy reached only 78.1 % in validation. The statistical features Mean, Standard Deviation, Kurtosis, Skewness, Median Absolute Deviation, Root-Mean-Square level, Zero Crossing Rate, Interquartile Range, and 75th Percentile, derived from the wavelet transform of the received signal observed during 100 and 500 microseconds, were studied using a fractional factorial design to determine the features with the highest effect on the response variables, classification accuracy and speed, for the AWGN channel and the Rician line-of-sight multipath channel. The highest classification speed of 170 000 objects/second together with 100 % classification accuracy has been demonstrated by fine decision trees using as input the Kurtosis derived from the wavelet coefficients of the signal observed during 100 microseconds for the AWGN channel. For the line-of-sight Rician fading channel with AWGN, the demonstrated classification speed is lower: 130 000 objects/s.
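To make the nine statistical features named above concrete, the following minimal Python sketch computes them from a series of wavelet coefficients. It is an illustration under stated assumptions, not the implementation used in this work: the single-level Haar wavelet, the function names `haar_detail` and `wavelet_statistics`, the non-excess kurtosis definition, the population standard deviation, and the inclusive quantile method are all choices made here for the example.

```python
import math
import statistics


def haar_detail(signal):
    """Single-level Haar DWT detail coefficients (assumed wavelet, illustration only)."""
    n = len(signal) - len(signal) % 2  # drop a trailing odd sample
    return [(signal[i] - signal[i + 1]) / math.sqrt(2) for i in range(0, n, 2)]


def wavelet_statistics(coeffs):
    """Return the nine statistics listed in the abstract for a coefficient series.

    Requires at least two coefficients.
    """
    n = len(coeffs)
    mean = statistics.fmean(coeffs)
    std = statistics.pstdev(coeffs)  # population standard deviation (assumption)
    centered = [c - mean for c in coeffs]
    m2 = sum(d ** 2 for d in centered) / n  # central moments
    m3 = sum(d ** 3 for d in centered) / n
    m4 = sum(d ** 4 for d in centered) / n
    median = statistics.median(coeffs)
    # Quartiles with linear interpolation; other percentile conventions exist.
    q1, _, q3 = statistics.quantiles(coeffs, n=4, method="inclusive")
    # Count sign changes between consecutive coefficients.
    sign_changes = sum(1 for a, b in zip(coeffs, coeffs[1:]) if a * b < 0)
    return {
        "mean": mean,
        "std": std,
        "kurtosis": m4 / m2 ** 2 if m2 else 0.0,  # non-excess (Gaussian = 3)
        "skewness": m3 / m2 ** 1.5 if m2 else 0.0,
        "median_abs_dev": statistics.median(abs(c - median) for c in coeffs),
        "rms": math.sqrt(sum(c * c for c in coeffs) / n),
        "zero_crossing_rate": sign_changes / (n - 1),
        "iqr": q3 - q1,
        "p75": q3,
    }


# Usage: a toy +/-1 sample sequence standing in for a received signal.
toy_signal = [1, -1, 1, -1, -1, 1, -1, 1]
features = wavelet_statistics(haar_detail(toy_signal))
print(features["kurtosis"], features["zero_crossing_rate"])
```

A single feature from this dictionary, for example the kurtosis, could then be passed to a decision tree classifier; the choice of wavelet and decomposition level would need to follow the setup described in the body of the paper.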

- I. Valieva, M. Björkman, J. Åkerberg, M. Ekström, I. Voitenko, “Multiple Machine Learning Algorithms Comparison for Modulation Type Classification for Efficient Cognitive Radio,” in MILCOM 2019 – 2019 IEEE Military Communications Conference (MILCOM), 318–323, 2019, doi:10.1109/MILCOM47813.2019.9020735.

- D. Rosker, D. M., “DARPA Spectrum Collaboration Challenge (SC2),” DARPA Spectrum Collaboration Challenge (SC2), 2020.

- C. Xin, Spectrum Sharing for Wireless Communications, 2015.

- O. Fink, Q. Wang, M. Svensén, P. Dersin, W.-J. Lee, M. Ducoffe, “Potential, challenges and future directions for deep learning in prognostics and health management applications,” Engineering Applications of Artificial Intelligence, 92, 103678, 2020, doi:10.1016/j.engappai.2020.103678.

- S. Peng, H. Jiang, H. Wang, H. Alwageed, Y.-D. Yao, “Modulation classification using convolutional Neural Network based deep learning model,” 1–5, 2017, doi:10.1109/WOCC.2017.7929000.

- K. Jiang, J. Zhang, H. Wu, A. Wang, Y. Iwahori, “A Novel Digital Modulation Recognition Algorithm Based on Deep Convolutional Neural Network,” Applied Sciences, 2020, doi:10.3390/app10031166.

- MathWorks, Modulation Classification with Deep Learning, 2020.

- M. Véstias, R. Duarte, J. Sousa, H. Neto, “Fast Convolutional Neural Networks in Low Density FPGAs Using Zero-Skipping and Weight Pruning,” Electronics, 8, 2019, doi:10.3390/electronics8111321.

- Z. Zhu, Automatic Modulation Classification: Principles, Algorithms and Applications, John Wiley & Sons, Incorporated, 2015.

- A. Kubankova, H. Atassi, A. Abilov, “Selection of optimal features for digital modulation recognition,” 2011.

- W. Gardner, Cyclostationarity in Communications and Signal Processing, IEEE Press, New York, 1994.

- W.A. Gardner, C.M. Spooner, “Cyclic spectral analysis for signal detection and modulation recognition,” in MILCOM 88, 21st Century Military Communications – What’s Possible? Conference Record, Military Communications Conference, 419–424 vol.2, 1988, doi:10.1109/MILCOM.1988.13425.

- C. Cormio, K.R. Chowdhury, “A survey on MAC protocols for cognitive radio networks,” Ad Hoc Networks, 7(7), 1315–1329, 2009, doi:10.1016/j.adhoc.2009.01.002.

- M. Raina, G. Aujla, “An Overview of Spectrum Sensing and its Techniques,” IOSR Journal of Computer Engineering, 16, 64–73, 2014, doi:10.9790/0661-16316473.

- K. Hinkelmann, Design and Analysis of Experiments, John Wiley & Sons, Inc., 2011.

- C. Rajan, Statistics for Scientists and Engineers, John Wiley & Sons, Incorporated, 2015.

- MathWorks, Mean or median absolute deviation, 2020.

- MathWorks, Root-mean-square level, 2020.

- MathWorks, Zero Crossing Rate, Nov. 2020.

- MathWorks, Interquartile range, 2020.

- MathWorks, 75th Percentile, 2020.

- MathWorks, Fading Channels, 2020.

1. Introduction

This paper is an extension of the work “Multiple Machine Learning Algorithms Comparison for Modulation Type Classification for Efficient Cognitive Radio” originally presented in Milcom 2019 [1].

The global market of mobile devices and services actively using the electromagnetic spectrum is continuously growing. Traditionally, electromagnetic spectrum utilization has followed a robust static approach developed almost a century ago: access to the spectrum is rationed in exchange for the guarantee of interference-free communication, and the spectrum is divided into rigid, exclusively licensed bands allocated over large, geographically defined regions. When some of these licensed bands are nearly unused while others are overwhelmed, the problem of spectrum scarcity arises [2]. To cope with the increasingly populated spectrum, electromagnetic spectrum utilization policies have been reformed in recent years with the objective of allowing unlicensed secondary users to access licensed bands without causing interference to the licensed primary users [3].

Blind waveform estimation techniques can be used with an intelligent transceiver, increasing the transmission efficiency by reducing the overhead. Waveform information is critical for implementing spectrum sharing scenarios like frequency hopping spread spectrum (FHSS) and dynamic spectrum access (DSA). Modulation type classification is a part of waveform estimation, together with central frequency and symbol rate estimation. Multiple artificial intelligence algorithms have been successfully applied to modulation classification problems. The availability of abundant data, breakthroughs in algorithms, and advancements in hardware during recent years have been driving the vibrant development of deep learning [4]. Modulation classification using convolutional neural networks (CNNs) has been presented in [5] and [6]. MathWorks published a hands-on practical implementation of deep learning-based modulation classification in [7]. In [8], the authors demonstrated a successful launch of an optimized AlexNet on a ZYNQ7045 FPGA: the inference execution times of CNNs in low-density FPGAs have been improved using fixed-point arithmetic, zero-skipping, and weight pruning. However, in this work the choice has been made to use less computationally demanding supervised ML algorithms.

Conventional artificial intelligence (AI) algorithms like machine learning (ML) require preprocessing of the input signal and feature extraction. Received signal features used for modulation classification can be divided into spectral-based and cyclostationary features. The spectral-based features exploit the unique spectral characteristics of different signal modulations in three physical aspects of the signal: the amplitude, phase, and frequency. In [9], the authors summarized some of the well-recognized spectral features designed for modulation classification and suggested a decision tree classifier. In [10], the authors applied instantaneous amplitude, instantaneous phase, and spectrum symmetry together with a set of new features from both the spectral and time domains, including linear predictive coefficients, adaptive component weighting, zero-crossing ratio, and linear frequency bank spectral coefficients, to the classification of commonly used digital modulations including ASK, FSK, MSK, BPSK, QPSK, PSK, FSK4 and QAM-16. Gardner pioneered the area of cyclostationary signal analysis [11]. Gardner and Spooner first implemented cyclostationary analysis for modulation classification problems in [12].

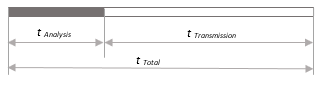

Figure 1: Optimizing sensing and transmission times.


The aim of this work is to determine the optimal modulation classification algorithm and to suggest the subset of the strongest and most robust features derived from the received signal that could be used to blindly classify the modulation type in our hardware application: a software-defined radio (SDR) based network consisting of multiple digital cognitive radio nodes. SDR-based nodes operating in the frequency band from 70 MHz to 6 GHz with up to 56 MHz of instantaneous bandwidth are used as the hardware platform for deploying the cognitive functionality with FHSS capabilities. The available computational resources are a dual-core ARM Cortex-A9 CPU, 2×512 MB of DDR3L RAM, and 512 MB of QSPI flash memory. The operating system is Embedded Linux. The radio part is based on the Analog Devices AD9364 radio transceiver and the Xilinx Zynq-7020 FPGA. It supports multiple digital modulations, both linear (QPSK, BPSK, 8PSK, 16PSK) and non-linear (2FSK). The symbol rate can also be adjusted between 10 kSymbols/s and 1 MSymbol/s to generate the cognitive waveform. Our target application predefines most of the boundary conditions and operational requirements, such as the required decision-making speed and the computational resources available. In this work, the time required for radio scene analysis, $t_{\mathrm{Analysis}}$, is defined as the sum of the time required for radio scene observation, $t_{\mathrm{Observation}}$, and the time required for processing the received signal at the receiver front end, $t_{\mathrm{Processing}}$. The time allocated for active data transmission is the data transmission time, $t_{\mathrm{Transmission}}$, and the total time is the sum of the observation and transmission times, as illustrated in Figure 1. Allocating the sensing time and the transmission time at the MAC layer involves a tradeoff between ensuring the primary users' (PUs') QoS requirements and maximizing the data throughput. To meet the real-time operation requirements on the target hardware, the proposed algorithms must meet the following real-time performance characteristics.

The maximum time available for radio scene observation is $t_{\mathrm{Observation}} = 500 \times 10^{-6}$ seconds. Our application is a time-slotted communication system in which 500 microseconds corresponds to one time slot. The maximum processing time has likewise been set to $t_{\mathrm{Processing}} = 500 \times 10^{-6}$ seconds, which requires a minimum classification speed of 2000 objects per second. Modulation classification is required for SNR values above the demodulation threshold of 12 dB, which corresponds to a bit error rate of $\mathrm{BER} = 10^{-8}$ for BPSK and $\mathrm{BER} = 3.4 \times 10^{-5}$ for 2FSK.
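The figures quoted above can be reproduced approximately with textbook AWGN formulas. The sketch below assumes that the 12 dB threshold is read as $E_b/N_0$ and that both modulations are detected coherently; neither assumption is stated in the text, and the function names are illustrative only.

```python
import math

def q_function(x):
    """Gaussian tail probability Q(x) = 0.5 * erfc(x / sqrt(2))."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))

def ber_bpsk(ebn0_db):
    """Coherent BPSK in AWGN: BER = Q(sqrt(2 * Eb/N0))."""
    ebn0 = 10.0 ** (ebn0_db / 10.0)
    return q_function(math.sqrt(2.0 * ebn0))

def ber_coherent_2fsk(ebn0_db):
    """Coherent orthogonal 2FSK in AWGN: BER = Q(sqrt(Eb/N0)) (assumed detector)."""
    ebn0 = 10.0 ** (ebn0_db / 10.0)
    return q_function(math.sqrt(ebn0))

def min_classification_speed(t_processing_us):
    """Objects per second needed to classify one object within the processing budget."""
    return 1_000_000 / t_processing_us

threshold_db = 12.0  # demodulation threshold quoted in the text
print(f"BPSK BER at {threshold_db} dB: {ber_bpsk(threshold_db):.1e}")
print(f"2FSK BER at {threshold_db} dB: {ber_coherent_2fsk(threshold_db):.1e}")
print(f"Minimum speed: {min_classification_speed(500):.0f} objects/s")
```

Under these assumptions the BPSK value lands close to $10^{-8}$ and the 2FSK value close to $3.4 \times 10^{-5}$, and a 500 microsecond processing budget translates into 2000 objects per second.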

The frequency of radio scene sensing (how often sensing should be performed by the cognitive radio) and the sensing time (the duration for which sensing is performed) are key parameters affecting the throughput. While longer sensing times ensure more accurate radio scene sensing, they may leave a comparatively shorter duration for actual data transmission within the total time for which the spectrum may be used, thereby lowering the throughput [13]. To balance sensing time against throughput, IEEE 802.22, for example, implements a two-stage sensing (TSS) mechanism that includes fast sensing, done at the rate of 1 ms/channel, and fine sensing, performed on demand. In this work, we have evaluated the possibility of performing the classification based on instantaneous values to shorten the spectrum sensing time and reduce computational costs. To improve classification accuracy, classification based on spectral-based statistical features derived from the wavelet transform of the received signal observed during a certain observation time frame has been proposed. In this study, observation times of 100 and 500 microseconds have been used. The lowest observation time, 100 microseconds, has been selected to accommodate the lowest symbol rate of 10 kSymbols/s supported in our target application for cognitive waveform generation.
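As a quick check of how the two observation windows relate to the supported symbol rates, the following sketch counts the symbols that fit into each window. The helper name is hypothetical; the rates and window lengths are the ones quoted in the text.

```python
def symbols_in_window(symbol_rate_sps, window_us):
    """Number of symbols received during an observation window given in microseconds."""
    return symbol_rate_sps * window_us / 1_000_000

for symbol_rate in (10_000, 1_000_000):      # 10 kSymbols/s and 1 MSymbol/s
    for window_us in (100, 500):             # the two studied observation times
        count = symbols_in_window(symbol_rate, window_us)
        print(f"{symbol_rate} symbols/s over {window_us} us: {count:g} symbols")
```

At the lowest symbol rate the 100 microsecond window covers exactly one symbol, which is why a shorter window would not span a full symbol of the slowest waveform.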

Figure 2: Modulation classification using a) instantaneous values of the time domain signal input; b) time series statistics input.


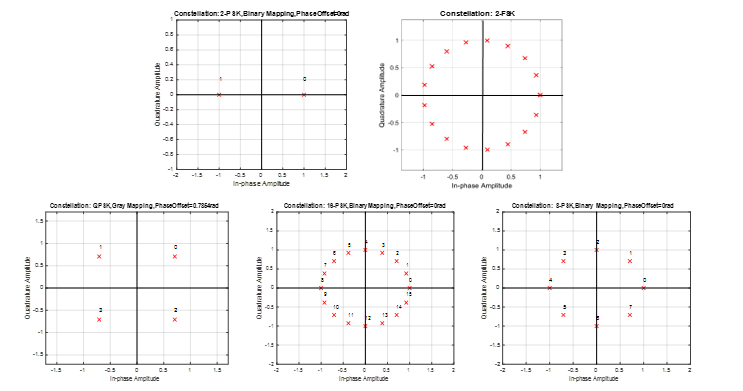

Figure 3: Constellation plots for the studied modulation classes BPSK, 2FSK, QPSK, 8PSK, 16PSK.

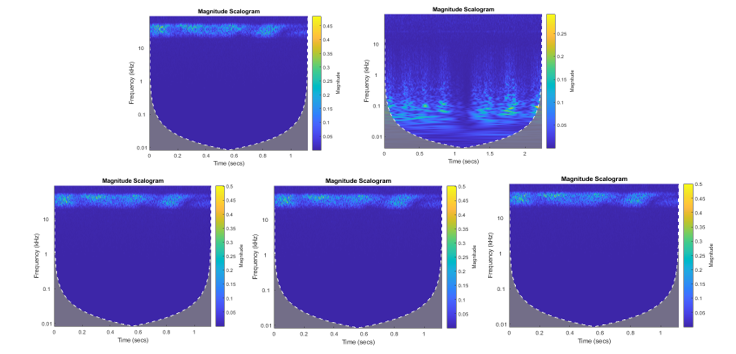

Figure 4: Scalograms obtained from the Haar wavelet transform coefficients for BPSK, 2FSK, QPSK, 8PSK, 16PSK. Observation time: 500 microseconds.

The highest observation time, 500 microseconds, corresponds to one time slot in our time-slotted target application. The wavelet transform has been used in this work for feature extraction, since the obtained wavelet coefficients could also be reused to detect vacant frequency channels and estimate the symbol rate, which could potentially save time and computational resources spent on radio scene analysis. Cyclostationary-based algorithms have also been named in the literature as powerful algorithms for modulation type and symbol rate estimation and for vacant frequency channel detection. However, they are prone to cyclostationary noise, require longer observation times, and are relatively computationally complex [14]; therefore, this work has focused on wavelet-based algorithms. Multiple supervised machine learning classifiers have been tested to perform classification based on instantaneous values and time-series statistics. The performance of the tested classifiers has been evaluated in terms of classification accuracy and speed.

2. Methodology

Both the feature extraction and the classification algorithms applied in the literature to the modulation classification problem have been studied and evaluated. First, modulation type classification has been performed using the instantaneous values of the in-phase and quadrature components of the time domain digital signal as inputs. Classification results were satisfactory for the binary classification between 2FSK and BPSK modulations. However, once the classification task was extended to higher-order modulations, the suggested approach showed an unacceptably low classification accuracy of 78.1 %. The highest classification accuracy of 84.9 % was observed in the validation phase for classifying the five modulations into two classes, linear and non-linear, using a Fine Gaussian SVM classifier.

Therefore, to improve the classification accuracy for higher-order modulations, we have looked at multiple statistical features extracted from the time series containing the received signal observed during observation times of 100 and 500 microseconds. The following criteria have been considered when selecting the classification features and the feature extraction algorithm:

1. Robustness to noise;
2. Computational complexity;
3. Possibility to reuse preprocessed data for solving other radio scene observation tasks such as vacant band detection and symbol rate estimation.

Nine spectral-based statistical features have been derived from the Haar wavelet transform coefficients calculated from the frequency domain signal observed during the selected observation time. The Haar transform has been selected as the simplest and least computationally demanding of all wavelet families. To determine the strongest features to be used as inputs to the ML classifier, a fractional factorial design analysis has been performed.

In statistics, a full factorial experiment is an experiment whose design consists of two or more factors, each with discrete possible values or “levels”, and whose experimental units take on all possible combinations of these levels across all studied factors. Fractional factorial designs are experimental designs consisting of a carefully chosen subset, or fraction, of the experimental runs of a full factorial design. The significance of a factor is measured quantitatively and referred to as its main effect [15]. A fractional factorial design approach has been used to analyze the main effects of each of the nine statistical features and of the observation time on the two studied response parameters, classification speed and accuracy, under two channel conditions: AWGN, and a Rician multipath channel with AWGN. Each feature has been studied at two levels: the first level, “-1”, corresponds to excluding the feature from the classification input, and the second level, “+1”, corresponds to including it. The observation time has also been varied between two levels: “-1” corresponds to 100 microseconds and “+1” corresponds to 500 microseconds. The MATLAB and Simulink environment has been used to generate the training and validation data sets, including the modulator and propagation channel models, and the MathWorks Classification Learner tool has been used to train and validate the classifiers. Twenty-four supervised ML classification algorithms have been studied and evaluated in terms of classification accuracy and classification speed for the two studied channel models: AWGN and Rician multipath with AWGN.
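To illustrate how a two-level fractional factorial design and its main effects are computed, the sketch below builds a small half-fraction design for three factors and estimates the main effects from a response vector. The generator (C = A·B), the factor names, and the accuracy values are made up for illustration; the design actually used for the ten factors of this study is not specified in the text.

```python
from itertools import product

def fractional_factorial(base_factors, generators):
    """Two-level design: full factorial in the base factors plus generated columns.

    generators maps a new factor name to the base factors whose product defines it.
    """
    runs = []
    for levels in product((-1, 1), repeat=len(base_factors)):
        run = dict(zip(base_factors, levels))
        for name, parents in generators.items():
            value = 1
            for parent in parents:
                value *= run[parent]
            run[name] = value
        runs.append(run)
    return runs

def main_effect(runs, responses, factor):
    """Mean response at level +1 minus mean response at level -1."""
    high = [y for run, y in zip(runs, responses) if run[factor] == 1]
    low = [y for run, y in zip(runs, responses) if run[factor] == -1]
    return sum(high) / len(high) - sum(low) / len(low)

# Half fraction of a 2^3 design: 4 runs instead of 8.
design = fractional_factorial(["A", "B"], {"C": ("A", "B")})

# Hypothetical validation accuracies (%) for the 4 runs, not taken from the paper.
accuracy = [70.0, 80.0, 90.0, 96.0]

effects = {factor: main_effect(design, accuracy, factor) for factor in "ABC"}
for factor, effect in effects.items():
    print(f"Main effect of {factor}: {effect:+.1f}")
```

A factor with a large positive main effect on accuracy would be a candidate to keep as a classifier input, while a factor with an effect near zero could be dropped to save computation.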

3. Input data and feature extraction

To suggest the optimal feature extraction and classification algorithms for our target radio application, seven artificial data sets consisting of signal samples modulated into 2FSK, BPSK, QPSK, 8PSK, and 16PSK have been generated. The data sets are described in detail in Section 4. Figure 3 presents the constellation plots of the five studied modulation types.

3.1. Classification using instantaneous values

The classification performed using instantaneous values of the time domain signal and SNR as classification inputs does not require any feature extraction: the raw values of the in-phase and quadrature component and SNR (recorded by transceiver as RSSI) have been used directly as an input to the classifier.

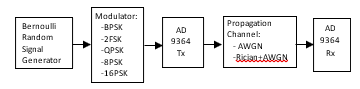

Figure 5: Generalized model used for data set generation.


Table 1: Spectral-based features derived from time series used for modulation type classification

| N | Feature | Factor | Description |
|---|---|---|---|
| 1 | Mean | A | Mean value of the wavelet coefficients obtained from single-level discrete wavelet transform of the frequency domain signal. |
| 2 | Standard Deviation | B | Standard deviation of the wavelet coefficients obtained from single-level discrete wavelet transform of the frequency domain signal. |
| 3 | Kurtosis | C | Kurtosis is a statistical measure that defines how heavily the tails of a distribution differ from the tails of a normal distribution. It is calculated from the wavelet coefficients obtained from single-level discrete wavelet transform of the frequency domain signal [16]. |
| 4 | Skewness | D | Skewness is a measure of the asymmetry of the probability distribution of a real-valued random variable about its mean. It is calculated from the wavelet coefficients obtained from single-level discrete wavelet transform of the frequency domain signal [16]. |
| 5 | Median absolute deviation | E | A measure of statistical dispersion: a robust measure of the variability of a univariate sample of quantitative data. It is more resilient to outliers in a data set than the standard deviation. It is calculated from the wavelet coefficients obtained from single-level discrete wavelet transform of the frequency domain signal [17]. |
| 6 | Root-mean-square level | F | The RMS value of a set of values (or a continuous-time waveform) is the square root of the arithmetic mean of the squares of the values, or the square of the function that defines the continuous waveform [18]. |
| 7 | Zero crossing rate | G | The rate of sign changes along a signal: the rate at which the signal changes from positive to zero to negative or from negative to zero to positive [19]. |
| 8 | Interquartile range | H | The interquartile range (IQR) is a measure of variability, calculated by dividing a data set into quartiles [20]. |
| 9 | 75th Percentile | I | The percentile rank of a score is the percentage of scores in its frequency distribution that are equal to or lower than it [21]. |
| 10 | SNR | J | Signal-to-noise ratio; corresponds to the RSSI value of the received signal measured by the transceiver. |

Table 2: Classifiers performance reached in validation. Classification between FSK and BPSK modulations. AWGN channel (SNR ranging from 1 to 30 dB). Data set 1.

| Classifier | With PCA (1 feature of 3): average accuracy, % | With PCA (1 feature of 3): prediction speed, objects/s | No PCA (3 features of 3): average accuracy, % | No PCA (3 features of 3): prediction speed, objects/s | No PCA (2 features of 3, no SNR): average accuracy, % | No PCA (2 features of 3, no SNR): prediction speed, objects/s |
|---|---|---|---|---|---|---|
| Decision Trees: Fine | 52.1 | 1 600 000 | 86.0 | 1 200 000 | 84.2 | 2 000 000 |
| Decision Trees: Medium | 51.3 | 1 200 000 | 85.2 | 1 000 000 | 83.3 | 2 100 000 |
| Decision Trees: Coarse | 50.7 | 1 300 000 | 82.2 | 2 300 000 | 82.1 | 2 100 000 |
| KNN: Fine | 55.5 | 770 000 | 82.3 | 310 000 | 78.9 | 300 000 |
| KNN: Medium | 57.9 | 220 000 | 86.1 | 110 000 | 84.6 | 110 000 |
| KNN: Coarse | 61.5 | 55 000 | 86.8 | 28 000 | 85.5 | 27 000 |
| KNN: Cosine | 50.0 | 300 | 83.7 | 290 | 78.1 | 320 |
| KNN: Cubic | 57.9 | 150 000 | 86.1 | 29 000 | 84.6 | 46 000 |
| KNN: Weighted | 56.0 | 220 000 | 84.2 | 130 000 | 82.0 | 120 000 |
| SVM: Linear | 50.1 | 47 000 | 55.3 | 3000 | 63.8 | 120 000 |
| SVM: Quadratic | 50.0 | 70 000 | 54.7 | 2300 | 42.8 | 92 000 |
| SVM: Cubic | 50.0 | 160 000 | 58.8 | 34 000 | 62.8 | 40 000 |
| SVM: Fine Gaussian | 49.5 | 230 | 86.9 | 790 | 85.4 | 970 |
| SVM: Medium Gaussian | 49.8 | 190 | 86.5 | 610 | 84.3 | 750 |
| SVM: Coarse Gaussian | 49.8 | 240 | 49.8 | 240 | 81.4 | 600 |
| Ensemble Boosted Trees | 51.3 | 110 000 | 86.3 | 120 000 | 85.0 | 120 000 |
| Ensemble Bagged Trees | 55.5 | 19 000 | 85.4 | 44 000 | 83.1 | 33 000 |
| Ensemble Subspace Discriminant | 49.8 | 100 000 | 50.3 | 86 000 | 50.5 | 120 000 |
| Ensemble Subspace KNN | 55.5 | 34 000 | 80.5 | 6100 | 69.5 | 17 000 |
| Ensemble RUS Boosted Trees | 51.3 | 120 000 | 85.1 | 150 000 | 83.3 | 130 000 |
| Logistic Regression | 49.8 | 1 600 000 | 50.9 | 1 200 000 | 50.5 | 3 200 000 |
| Linear Discriminant | 49.8 | 1 200 000 | 50.3 | 1 900 000 | 50.5 | 1 300 000 |

3.2. Classification using time-series statistics

Nine spectral-based statistical features have been derived from the received signal observed during the observation time. First, a fast Fourier transform has been applied to move to the frequency domain. Then a discrete wavelet transform using the Haar wavelet has been applied to the frequency domain signal. This transform cross-multiplies a function against the Haar wavelet with various shifts and stretches. Figure 4 presents scalograms for the studied modulations obtained by the Haar wavelet transform. Finally, the nine spectral-based statistical features summarized in Table 1 have been calculated from the derived wavelet coefficients.
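The feature extraction chain described above can be sketched in a few lines. This is an illustration under stated assumptions rather than the implementation used in the study: the wavelet transform is applied to the magnitude spectrum, approximation and detail coefficients are concatenated before the statistics are computed, population moments are used, and the quartiles follow the default convention of Python's `statistics.quantiles`, which may differ from the MATLAB one. All function names are hypothetical.

```python
import cmath
import math
import statistics

def dft_magnitude(samples):
    """Magnitude spectrum via a direct O(n^2) DFT (adequate for a short sketch)."""
    n = len(samples)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(samples)))
        for k in range(n)
    ]

def haar_dwt(values):
    """Single-level Haar DWT: returns (approximation, detail) coefficients."""
    if len(values) % 2:
        values = values[:-1]  # drop the last sample so that all pairs are complete
    scale = math.sqrt(2.0)
    approx = [(values[i] + values[i + 1]) / scale for i in range(0, len(values), 2)]
    detail = [(values[i] - values[i + 1]) / scale for i in range(0, len(values), 2)]
    return approx, detail

def statistical_features(coeffs):
    """The nine statistics of Table 1 computed from a coefficient vector."""
    n = len(coeffs)
    mean = statistics.fmean(coeffs)
    centered = [c - mean for c in coeffs]
    m2 = sum(c ** 2 for c in centered) / n  # population central moments
    m3 = sum(c ** 3 for c in centered) / n
    m4 = sum(c ** 4 for c in centered) / n
    median = statistics.median(coeffs)
    q1, _, q3 = statistics.quantiles(coeffs, n=4)
    sign_changes = sum(1 for a, b in zip(coeffs, coeffs[1:]) if a * b < 0)
    return {
        "mean": mean,
        "std": math.sqrt(m2),
        "kurtosis": m4 / m2 ** 2 if m2 else float("nan"),
        "skewness": m3 / m2 ** 1.5 if m2 else float("nan"),
        "mad": statistics.median(abs(c - median) for c in coeffs),
        "rms": math.sqrt(sum(c ** 2 for c in coeffs) / n),
        "zero_crossing_rate": sign_changes / (n - 1),
        "iqr": q3 - q1,
        "p75": q3,
    }

# Toy BPSK-like baseband samples (4 samples per symbol, alternating symbols).
iq = [cmath.exp(1j * math.pi * (i // 4 % 2)) for i in range(32)]
approx, detail = haar_dwt(dft_magnitude(iq))
features = statistical_features(approx + detail)
print(features)
```

In a deployment the direct DFT would be replaced by an FFT routine, and the SNR reported by the transceiver would be appended as the tenth input.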

4. Data set

Seven data sets used for training and validation have been generated and are described briefly below. Three labeled artificial data sets consist of three features: the instantaneous values of the in-phase and quadrature components of the time domain signal, and the SNR. Four data sets consist of nine statistical features extracted from time series recorded during 100 and 500 microseconds, plus the SNR, for two channel models: AWGN and Rician with AWGN. Figure 5 describes the generalized model used to generate the data sets.

Data Set 1: Binary classification between BPSK and 2FSK using instantaneous values. The data set for training and validation has been generated using the virtual model presented in Figure 5. The random input signal has been generated by a Bernoulli binary random signal generator and modulated into BPSK or 2FSK. The modulated signal is transmitted by the AD9364 TX transmitter model. An AWGN channel model has been selected to emulate the propagation environment, with SNR ranging from 30 down to 1 dB. The data set consists of 408 000 rows and 4 columns: the first two columns correspond to the instantaneous values of the signal in the time domain (in-phase and quadrature components), the third column corresponds to the SNR value, and the fourth column holds the data label. 80 % of the data set has been used for training the classifiers and 20 % for validation.
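The row layout of Data Set 1 can be mimicked with a simplified baseband stand-in for the Simulink model. The sketch below is not the model of Figure 5: it omits the transceiver, assumes unit-power signals with SNR defined per sample, and uses hypothetical values for the samples per symbol and the FSK frequency deviation.

```python
import cmath
import math
import random

def bpsk(bits, samples_per_symbol):
    """Rectangular-pulse BPSK: bit 0 -> +1, bit 1 -> -1."""
    return [complex(1 - 2 * b, 0.0) for b in bits for _ in range(samples_per_symbol)]

def fsk2(bits, samples_per_symbol, deviation=0.125):
    """Continuous-phase 2FSK; deviation is in cycles per sample (hypothetical value)."""
    phase, out = 0.0, []
    for b in bits:
        step = 2.0 * math.pi * deviation * (1 if b else -1)
        for _ in range(samples_per_symbol):
            phase += step
            out.append(cmath.exp(1j * phase))
    return out

def awgn(samples, snr_db, rng):
    """Add complex Gaussian noise to unit-power samples at the given SNR."""
    sigma = math.sqrt(10.0 ** (-snr_db / 10.0) / 2.0)
    return [s + complex(rng.gauss(0.0, sigma), rng.gauss(0.0, sigma)) for s in samples]

def make_rows(n_bits, snr_values_db, samples_per_symbol=8, seed=0):
    """Rows of (in-phase, quadrature, SNR in dB, label), as in Data Set 1."""
    rng = random.Random(seed)
    rows = []
    for snr_db in snr_values_db:
        bits = [rng.randint(0, 1) for _ in range(n_bits)]
        signals = (("BPSK", bpsk(bits, samples_per_symbol)),
                   ("2FSK", fsk2(bits, samples_per_symbol)))
        for label, signal in signals:
            for s in awgn(signal, snr_db, rng):
                rows.append((s.real, s.imag, snr_db, label))
    return rows

def train_validation_split(rows, train_fraction=0.8, seed=0):
    """Shuffle the rows and split them into training and validation parts."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

rows = make_rows(n_bits=10, snr_values_db=range(1, 31))
train, validation = train_validation_split(rows)
print(len(rows), len(train), len(validation))
```

Each row carries only one noisy I/Q sample, which is what makes the instantaneous-value approach fast and also explains why the SNR column adds useful context for the classifier.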

Data Set 2: Classification of BPSK, 2FSK, QPSK, 8PSK, 16PSK into two classes, linear and non-linear, using instantaneous values. A data set of 910 000 rows by 4 columns consisting of BPSK, 2FSK, QPSK, 8PSK, and 16PSK signals has been generated in the same way as Data Set 1: the first two columns correspond to the instantaneous values of the signal in the time domain (in-phase and quadrature components), the third column corresponds to the SNR value, and the fourth column holds the data label, which takes one of two classes: linear or non-linear modulation. Again, 80 % of the generated data set has been used for training the classifiers and 20 % for validation.

Data Set 3: Classification of BPSK, 2FSK, QPSK, 8PSK, 16PSK into five classes using instantaneous values. Data set 3 consists of the same five modulations and has been used to train classifiers to distinguish all five modulation types. Compared to Data Set 2, only the fourth column, corresponding to the label, has been changed to accommodate five output classes. Again, 80 % of the data set has been used for training the classifiers and 20 % for validation.

Table 3: Classifiers performance reached in validation. Classification between linear and non-linear modulations. Non-linear: FSK; linear: BPSK, QPSK, 8PSK, 16PSK. AWGN channel (SNR ranging from 1 to 30 dB). Data set 2.

| Classifier | Average accuracy, % | Prediction speed, objects/s |
|---|---|---|
| Decision Trees: Fine | 80.5 | 1 200 000 |
| Optimized Trees | 83.0 | 3 400 000 |
| KNN: Fine | 77.1 | 310 000 |
| KNN: Medium | 82.6 | 110 000 |
| SVM: Fine Gaussian | 84.9 | 790 |
| Ensemble Boosted Trees | 80.9 | 120 000 |
| Ensemble Bagged Trees | 81.8 | 44 000 |
| Ensemble Subspace KNN | 80.5 | 6100 |
| Ensemble RUS Boosted Trees | 78.1 | 150 000 |

Data Set 4: Classification of BPSK, 2FSK, QPSK, 8PSK, 16PSK into five classes using statistical features derived from time series observed during 500 microseconds. AWGN channel.

Data set 4 consists of 1500 samples (300 signal samples for every modulation type), resulting in a matrix of 1500 rows by 11 columns, where the first nine columns correspond to the statistical features, column 10 corresponds to the SNR, and the last column corresponds to the data class label. The statistical features for every signal sample have been derived from the received signal observed during 500 microseconds under AWGN propagation channel conditions (SNR ranging from 1 dB to 30 dB). Again, 80 % of the data set has been used for training and 20 % for validation.

Data Set 5: Classification of BPSK, 2FSK, QPSK, 8PSK, 16PSK into five classes using statistical features derived from time series observed during 100 microseconds. AWGN channel. Data set 5 consists of 1500 samples (300 signal samples for every modulation type), resulting in a matrix of 1500 rows by 11 columns, where the first nine columns correspond to the statistical features, column 10 corresponds to the SNR, and the last column corresponds to the data class label. The statistical features for every signal sample have been derived from the received signal observed during 100 microseconds under AWGN propagation channel conditions with SNR ranging from 1 to 30 dB. Again, 80 % of the data set has been used for training and 20 % for validation.

Data Set 6: Classification of BPSK, 2FSK, QPSK, 8PSK, 16PSK into five classes using statistical features derived from the received signal observed during 500 microseconds. Rician multipath channel. Data set 6 consists of 1500 samples (300 signal samples for every modulation type), resulting in a matrix of 1500 rows by 11 columns, where the first nine columns correspond to the statistical features, column 10 corresponds to the SNR, and the last column corresponds to the data class label. The statistical features have been derived from the time-domain signal observed during 500 microseconds under a Rician multipath line-of-sight fading channel model with AWGN (SNR = 30 dB). Three fading paths have been chosen, with path delays selected for outdoor environments: 0, $9 \times 10^{-5}$, and $1.7 \times 10^{-5}$. Path delays on the order of $10^{-5}$ are suggested for mountainous areas. The average path gains have been set to [0 -2 -10]. The Rician K-factor, which specifies the ratio of specular-to-diffuse power for a direct line-of-sight path, has been set to 4; it is usually in the range [1, 10], and 0 corresponds to Rayleigh fading. The maximum Doppler shift has been set to 4 Hz, which corresponds to a signal from a moving pedestrian [22]. Again, 80 % of the data set has been used for training the classifiers and 20 % for validation.

Data Set 7: Classification of BPSK, 2FSK, QPSK, 8PSK, 16PSK into five classes using statistical features derived from time series observed during 100 microseconds. Rician multipath channel. Data set 7 consists of 1500 samples (300 signal samples for every modulation type), resulting in a matrix of 1500 rows by 11 columns, where the first nine columns correspond to the statistical features, column 10 corresponds to the SNR, and the last column corresponds to the data class label. The statistical features for every signal sample have been derived, following the feature extraction steps of Section 3.2, from the time-domain signal observed during 100 microseconds under a Rician multipath channel model with AWGN (SNR = 30 dB). The properties of the Rician channel are the same as in data set 6. 80 % of the data set has been used to train the classifiers and 20 % for validation.

Table 4: Classifiers performance reached in validation. Classification between FSK, BPSK, QPSK, 8PSK and 16PSK modulations using statistical features derived from the wavelet transform of the time series recorded during 500 microseconds. AWGN (SNR ranging from 1 to 30 dB) channel. Data set 4.

| Classifier | With PCA (1 feature of 10): average accuracy, % | With PCA (1 feature of 10): prediction speed, objects/s | No PCA (10 features of 10): average accuracy, % | No PCA (10 features of 10): prediction speed, objects/s | No PCA (5 features of 10): average accuracy, % | No PCA (5 features of 10): prediction speed, objects/s | No PCA, Mean (1 feature of 10): average accuracy, % | No PCA, Mean (1 feature of 10): prediction speed, objects/s |
|---|---|---|---|---|---|---|---|---|
| Decision Trees: Fine | 100 | 15000 | 100 | 21000 | 100 | 20000 | 100 | 12000 |
| Decision Trees: Medium | 89.3 | 13000 | 99.7 | 23000 | 90.6 | 21000 | 79.9 | 18000 |
| Decision Trees: Coarse | 82.9 | 15000 | 88.1 | 19000 | 68.9 | 23000 | 67.5 | 19000 |
| KNN: Fine | 100 | 22000 | 100 | 42000 | 100 | 48000 | 100 | 51000 |
| KNN: Medium | 98.1 | 22000 | 96.6 | 26000 | 98.2 | 38000 | 97.2 | 56000 |
| KNN: Coarse | 80.6 | 18000 | 61.1 | 13000 | 61.7 | 21000 | 69.1 | 36000 |
| KNN: Cosine | 38.3 | 18000 | 96.9 | 22000 | 97.2 | 33000 | 21.3 | 38000 |
| KNN: Cubic | 98.1 | 17000 | 96.6 | 7800 | 98.5 | 20000 | 97.2 | 52000 |
| KNN: Weighted | 100 | 18000 | 100 | 36000 | 100 | 52000 | 100 | 52000 |
| SVM: Linear | 57.0 | 15000 | 93.8 | 18000 | 75.1 | 21000 | 63.8 | 28000 |
| SVM: Quadratic | 51.2 | 20000 | 100 | 17000 | 98.0 | 17000 | 51.8 | 22000 |
| SVM: Cubic | 59.5 | 16000 | 100 | 16000 | 100 | 17000 | 47.7 | 27000 |
| SVM: Fine Gaussian | 79.9 | 13000 | 100 | 12000 | 99.2 | 16000 | 75.9 | 18000 |
| SVM: Medium Gaussian | 81.4 | 9400 | 97.9 | 16000 | 85.0 | 18000 | 69.4 | 20000 |
| SVM: Coarse Gaussian | 52.1 | 8200 | 88.9 | 14000 | 67.8 | 14000 | 65.9 | 16000 |
| Ensemble Boosted Trees | 93.0 | 9200 | 52.8 | 27000 | 99.9 | 10000 | 82.1 | 11000 |
| Ensemble Bagged Trees | 100 | 8200 | 100 | 11000 | 100 | 11000 | 100 | 12000 |
| Ensemble Subspace Discriminant | 56.3 | 6100 | 69.9 | 5900 | 59.3 | 6200 | 70.6 | 9500 |
| Ensemble Subspace KNN | 100 | 4400 | 100 | 4500 | 100 | 5000 | 100 | 6700 |
| Ensemble RUS Boosted Trees | 90.5 | 9800 | 83.6 | 61000 | 95.3 | 13000 | 80.4 | 11000 |
| Linear Discriminant | 56.3 | 13000 | 77.4 | 17000 | 62.5 | 17000 | 70.6 | 17000 |
| Naïve Bayes | 48.8 | 28000 | 60.9 | 60000 | 86.0 | 4800 | 73.8 | 18000 |

Table 5: Classifiers performance reached in validation. Classification between FSK, BPSK, QPSK, 8PSK and 16PSK modulations using statistical features derived from the wavelet transform of the time series recorded during 500 microseconds for the Rician multipath with AWGN (SNR = 30 dB) channel model. Data set 6.

| Classifier | With PCA (1 feature of 10): average accuracy, % | With PCA (1 feature of 10): prediction speed, objects/s | No PCA (10 features of 10): average accuracy, % | No PCA (10 features of 10): prediction speed, objects/s | No PCA (5 features of 10): average accuracy, % | No PCA (5 features of 10): prediction speed, objects/s | No PCA, Mean (1 feature of 10): average accuracy, % | No PCA, Mean (1 feature of 10): prediction speed, objects/s |
|---|---|---|---|---|---|---|---|---|
| Decision Trees: Fine | 100 | 21000 | 100 | 27000 | 100 | 150000 | 93.0 | 160000 |
| Decision Trees: Medium | 49.5 | 98000 | 60.9 | 140000 | 55.6 | 150000 | 40.8 | 140000 |
| Decision Trees: Coarse | 41.6 | 11000 | 45.2 | 110000 | 41.5 | 120000 | 31.1 | 150000 |
| KNN: Fine | 100 | 22000 | 100 | 40000 | 100 | 79000 | 100 | 72000 |
| KNN: Medium | 95.7 | 23000 | 96 | 25000 | 95.7 | 45000 | 95.4 | 71000 |
| KNN: Coarse | 34.5 | 18000 | 36 | 16000 | 42.5 | 30000 | 37.7 | 36000 |
| KNN: Cosine | 37.7 | 17000 | 95.7 | 36000 | 95.7 | 30000 | 21.3 | 34000 |
| KNN: Cubic | 95.7 | 24000 | 95.6 | 2400 | 95.3 | 17000 | 95.4 | 54000 |
| KNN: Weighted | 100 | 29000 | 100 | 35000 | 100 | 38000 | 100 | 81000 |
| SVM: Linear | 36.1 | 21000 | 36.6 | 26000 | 36.1 | 26000 | 21.8 | 40000 |
| SVM: Quadratic | 35.7 | 17000 | 66.6 | 21000 | 45.2 | 26000 | 23.5 | 29000 |
| SVM: Cubic | 36.5 | 19000 | 100 | 21000 | 61.4 | 30000 | 23.2 | 33000 |
| SVM: Fine Gaussian | 37.1 | 7800 | 91.8 | 11000 | 81.6 | 14000 | 38.0 | 11000 |
| SVM: Medium Gaussian | 36.2 | 8700 | 46.0 | 10000 | 44.2 | 11000 | 29.6 | 12000 |
| SVM: Coarse Gaussian | 35.0 | 8500 | 35.1 | 9700 | 34.9 | 11000 | 24.3 | 9700 |
| Ensemble Boosted Trees | 56.8 | 9400 | 86.4 | 12000 | 85.4 | 9700 | 49.9 | 12000 |
| Ensemble Bagged Trees | 100 | 9300 | 100 | 14000 | 100 | 8700 | 100 | 10000 |
| Ensemble Subspace Discriminant | 35.8 | 7800 | 39 | 8100 | 35.0 | 6400 | 22.5 | 8400 |
| Ensemble Subspace KNN | 100 | 5700 | 100 | 5300 | 100 | 4100 | 100 | 6100 |
| Ensemble RUS Boosted Trees | 61.7 | 11000 | 80.5 | 14000 | 86.0 | 14000 | 47.5 | 13000 |
| Linear Discriminant | 35.8 | 8600 | 37.9 | 18000 | 34.7 | 110000 | 22.5 | 100000 |
| Naïve Bayes | 39.9 | 9700 | 37.6 | 74000 | 37.9 | 110000 | 24.9 | 140000 |

5. ML classifiers training and validation results

Twenty-three selected classifiers have been trained and validated multiple times using every data set described above to investigate the effect of the extracted features and observation time on classification accuracy and speed. The trained classifiers include decision trees, KNN variants, support vector machines (SVM), ensembles with bagging and boosting sampling, and discriminant methods. In addition, principal component analysis (PCA) has been applied for dimension reduction, keeping enough components to explain 95 % of the data variance. To protect against overfitting, five-fold cross-validation was used.
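The validation procedure described above can be illustrated with a minimal Python sketch, assuming NumPy is available. It keeps the fewest principal components that explain 95 % of the variance and estimates accuracy with five-fold cross-validation. The function names, the 1-nearest-neighbour placeholder classifier, the synthetic data, and the choice to refit PCA inside each fold are assumptions made for illustration, not the configuration used in this study.

```python
import numpy as np


def pca_95(X_train, X_test, variance=0.95):
    """Project both sets onto the fewest components explaining `variance`."""
    mean = X_train.mean(axis=0)
    # Rows of vt are the principal directions of the centred training data.
    _, s, vt = np.linalg.svd(X_train - mean, full_matrices=False)
    explained = np.cumsum(s**2) / np.sum(s**2)
    k = min(int(np.searchsorted(explained, variance)) + 1, len(s))
    return (X_train - mean) @ vt[:k].T, (X_test - mean) @ vt[:k].T, k


def one_nn(Z_train, y_train, Z_test):
    """Placeholder classifier (1-nearest neighbour), chosen only for brevity."""
    dist = np.linalg.norm(Z_test[:, None, :] - Z_train[None, :, :], axis=2)
    return y_train[np.argmin(dist, axis=1)]


def cross_validated_accuracy(X, y, folds=5, seed=0):
    """Mean held-out accuracy over `folds` folds, with PCA refitted per fold."""
    order = np.random.default_rng(seed).permutation(len(y))
    scores = []
    for held_out in np.array_split(order, folds):
        train = np.setdiff1d(order, held_out)
        Z_train, Z_test, _ = pca_95(X[train], X[held_out])
        scores.append(np.mean(one_nn(Z_train, y[train], Z_test) == y[held_out]))
    return float(np.mean(scores))


# Synthetic two-class data with three features (illustrative values only).
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0.0, 1.0, (100, 3)), rng.normal(5.0, 1.0, (100, 3))])
y = np.repeat([0, 1], 100)
accuracy = cross_validated_accuracy(X, y)
```

Refitting PCA on the training folds only keeps the held-out fold from influencing the projection; whether the original tooling does the same is not stated here.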

5.1. Classification using instantaneous values

Modulation type classification has been performed on the instantaneous values of the in-phase and quadrature components of the time domain digital signal. First, the classifiers have been trained three times using data set 1: (1) using PCA; (2) using all three features (in-phase component, quadrature component, and SNR); and (3) using two features (in-phase and quadrature components). Table 2 summarizes the validation results for the AWGN channel with SNR ranging from 1 to 30 dB. The effect of the input features available in data set 1 on classification accuracy and speed has been studied. The highest classification accuracy has been achieved using all three available features: in-phase component, quadrature component, and SNR. However, removing SNR from the classification inputs reduced the average classification accuracy by only 3 %. The highest average classification accuracy of 86.9 % was observed for the Fine Gaussian SVM, which, on the other hand, was the slowest classifier. Ensemble boosted trees with 30 decision tree learners trained using AdaBoost sampling and 20 splits, and fine decision trees, have shown optimal performance in terms of both classification accuracy and speed, with average classification accuracies of 86.3 % and 86.0 %, respectively, and a classification speed of 1 200 000 objects per second, which exceeds the required 2000 objects per second.
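As an illustration of the three-feature input layout (in-phase component, quadrature component, SNR), the following Python sketch builds feature rows for BPSK symbols in complex AWGN. It assumes NumPy, unit-power symbols, one sample per symbol, and a hypothetical function name; it does not reproduce how data set 1 was generated, and 2FSK is omitted for brevity.

```python
import numpy as np


def bpsk_iq_features(n_symbols, snr_db, seed=0):
    """Return rows [I, Q, SNR_dB] for unit-power BPSK symbols in complex AWGN."""
    rng = np.random.default_rng(seed)
    symbols = rng.choice([-1.0, 1.0], size=n_symbols).astype(complex)
    # Signal power is 1, so the total noise power equals 1 / SNR (linear).
    noise_power = 10.0 ** (-snr_db / 10.0)
    noise = np.sqrt(noise_power / 2.0) * (
        rng.standard_normal(n_symbols) + 1j * rng.standard_normal(n_symbols)
    )
    received = symbols + noise
    snr_column = np.full(n_symbols, float(snr_db))
    return np.column_stack([received.real, received.imag, snr_column])


# 20000 feature rows at 10 dB SNR (illustrative values only).
features = bpsk_iq_features(20000, snr_db=10)
```

Dropping the third column gives the two-feature variant, which corresponds to training without SNR.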

Data set 3, which also contains instantaneous values of the time domain signal and SNR but covers five modulations, has been used to train classifiers to distinguish all five modulation types. However, the average classification accuracy has not met the requirement of 85 %. The highest classification accuracy of 78.1 % has been reached by a customized decision tree with the number of splits set to 2689 and Gini’s diversity index used as the split criterion.
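For reference, Gini's diversity index of a node is one minus the sum of squared class fractions, and a candidate split is scored by the size-weighted index of its two children. The short Python sketch below shows this calculation; the function names and the example labels are illustrative assumptions.

```python
from collections import Counter


def gini(labels):
    """Gini diversity index: 1 - sum of squared class fractions."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def split_gini(values, labels, threshold):
    """Size-weighted Gini index of the two children of a threshold split."""
    left = [lab for v, lab in zip(values, labels) if v < threshold]
    right = [lab for v, lab in zip(values, labels) if v >= threshold]
    n = len(labels)
    return len(left) / n * gini(left) + len(right) / n * gini(right)


# A node holding the five modulation classes in equal shares is maximally mixed.
mixed_node = gini(["FSK", "BPSK", "QPSK", "8PSK", "16PSK"])
```

A decision tree learner chooses, at each node, the feature and threshold with the lowest weighted index, so a value of 0 means both children are pure.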

The other tested classifiers, including ensemble bagged trees, ensemble boosted trees, and RUS boosted trees, have demonstrated even lower classification accuracy than decision trees. To capture more of the fine differences between signals modulated with different linear modulations, it is suggested to use spectral features derived from the signal observed during the selected observation time.

5.2. Classification using time-series statistics

Training and validation for both propagation channels, AWGN (SNR from 1 dB to 30 dB) and Rician fading with AWGN (SNR = 30 dB), have been performed using data sets 4-7. First, the studied classifiers have been trained on time series recorded during an observation time of 500 microseconds, using data sets 4 and 5 for the AWGN channel and the Rician channel with AWGN, respectively. Classifiers have been trained four times: (1) using PCA; (2) using all ten spectral-based statistical features; (3) using five features: mean, standard deviation, root-mean-square level, zero crossing rate, and 75th percentile of the data set; and (4) using only one input feature, the mean value of the wavelet coefficients. Tables 4-5 summarize the validation results for the AWGN channel with SNR ranging from 1 to 30 dB and the Rician multipath with AWGN (SNR = 30 dB) channel. The best performing classifiers, those that reached 100% classification accuracy, have been retrained on the features derived from the time series observed during 100 microseconds. Tables 6 and 7 present the validation results for the AWGN and multipath Rician channels, with training and validation repeated four times as for the time series recorded during 500 microseconds. Tables 6 and 7 show that four out of the five selected classifiers (fine decision trees, fine KNN, ensemble bagged trees, and ensemble subspace KNN), which demonstrated a classification accuracy of 100 % on the time series recorded during 500 microseconds, have also demonstrated classification accuracy close to 100 % on the time series recorded during 100 microseconds when trained using PCA, using all ten features, and using five features, for both the AWGN and multipath channels. However, SVM has shown worse performance when trained with a reduced number of features.
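The nine time-series statistics named above can be computed from a one-dimensional array of coefficients as in the following Python sketch, which assumes NumPy. The wavelet transform itself is not shown: the input is simply assumed to hold the wavelet coefficients of one observation window. The exact estimator conventions (sample standard deviation, non-excess kurtosis, linear percentile interpolation, sign-change zero-crossing count) are assumptions and may differ from those used to build data sets 4-7.

```python
import numpy as np


def time_series_statistics(coefficients):
    """Nine summary statistics of a 1-D coefficient series."""
    c = np.asarray(coefficients, dtype=float)
    mean = c.mean()
    centred = c - mean
    m2 = np.mean(centred**2)  # second central moment
    q25, q75 = np.percentile(c, [25, 75])
    negative = np.signbit(c)
    return {
        "mean": mean,
        "std": c.std(ddof=1),
        "kurtosis": np.mean(centred**4) / m2**2,
        "skewness": np.mean(centred**3) / m2**1.5,
        "median_abs_dev": np.median(np.abs(c - np.median(c))),
        "rms": np.sqrt(np.mean(c**2)),
        # Fraction of adjacent sample pairs whose signs differ.
        "zero_crossing_rate": np.count_nonzero(negative[1:] != negative[:-1])
        / (len(c) - 1),
        "iqr": q75 - q25,
        "p75": q75,
    }


# Alternating toy series (illustrative values only).
stats = time_series_statistics([1.0, -1.0, 1.0, -1.0])
```

Selecting a subset of the dictionary keys gives the five-feature and single-feature (mean only) variants described in the text.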

Applying PCA has resulted in a drastic decrease in the classification accuracy for ensemble subspace KNN for both AWGN and multipath Rician channels. For most classifiers, such as fine decision trees and ensemble bagged trees, applying PCA has reduced the classification speed. Fine KNN has shown 100% classification accuracy using only one input to the classifier, the mean value, together with its highest classification speed of 56000 and 91000 objects/s for the AWGN and multipath fading channels, respectively. However, even with only one feature, fine KNN is still slower than fine decision trees using five features, which classify 110000 and 120000 objects/s for the AWGN and multipath channels, respectively.
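Prediction speed in objects per second can be estimated by timing a batch prediction, as in the Python sketch below. The helper name, the best-of-several-runs policy, and the threshold stand-in classifier are assumptions for illustration; the figures reported in the tables were not obtained with this code.

```python
import time


def prediction_speed(predict, objects, repeats=5):
    """Best-of-`repeats` throughput of `predict` in objects per second."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        predict(objects)
        best = min(best, time.perf_counter() - start)
    # Guard against a zero reading from a coarse timer.
    return len(objects) / max(best, 1e-12)


def threshold_classifier(values):
    """Stand-in for a trained model: labels each value by its sign."""
    return [1 if v > 0 else 0 for v in values]


samples = [(-1) ** i * 0.5 for i in range(10000)]
speed = prediction_speed(threshold_classifier, samples)
meets_requirement = speed >= 2000  # the 2000 objects/s requirement from the text
```

Measured throughput depends strongly on hardware and batch size, so values obtained this way are comparable only within one setup.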

6. Classification feature analysis using fractional factorial design

The fractional factorial design has been applied to determine the most significant classification features, referred to as the design factors.

Table 6: Classifiers performance reached in validation. Classification between FSK, BPSK, QPSK, 8PSK and 16PSK modulations using statistical features derived from the wavelet transform of the time series recorded during 100 microseconds for AWGN (SNR ranging from 1 to 30 dB) channel. Data set 6

| Classifier | With PCA (1 feature of 10): average accuracy, % | With PCA (1 feature of 10): prediction speed, objects/s | No PCA (10 features of 10): average accuracy, % | No PCA (10 features of 10): prediction speed, objects/s | No PCA (5 features of 10): average accuracy, % | No PCA (5 features of 10): prediction speed, objects/s | No PCA, mean (1 feature of 10): average accuracy, % | No PCA, mean (1 feature of 10): prediction speed, objects/s |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Decision Trees: Fine | 100 | 9500 | 100 | 18000 | 100 | 110000 | 100 | 120000 |
| KNN: Fine | 100 | 21000 | 100 | 37000 | 98.6 | 19000 | 100 | 56000 |
| KNN: Weighted | 100 | 22000 | 100 | 34000 | 100 | 47000 | 100 | 58000 |
| SVM: Cubic | 95.7 | 23000 | 100 | 19000 | 100 | 24000 | 38.7 | 31000 |
| Ensemble Bagged Trees | 100 | 7600 | 100 | 8700 | 100 | 6800 | 100 | 11000 |
| Ensemble Subspace KNN | 36.4 | 5500 | 100 | 4700 | 100 | 4800 | 100 | 5400 |

Table 7: Classifiers performance reached in validation. Classification between FSK, BPSK, QPSK, 8PSK and 16PSK modulations using statistical features derived from the wavelet transform of the time series recorded during 100 microseconds for Rician multipath with AWGN (SNR = 30 dB) channel model. Data set 7.

| Classifier | With PCA (1 feature of 10): average accuracy, % | With PCA (1 feature of 10): prediction speed, objects/s | No PCA (10 features of 10): average accuracy, % | No PCA (10 features of 10): prediction speed, objects/s | No PCA (5 features of 10): average accuracy, % | No PCA (5 features of 10): prediction speed, objects/s | No PCA, mean (1 feature of 10): average accuracy, % | No PCA, mean (1 feature of 10): prediction speed, objects/s |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Decision Trees: Fine | 100 | 15000 | 100 | 23000 | 100 | 120000 | 94.2 | 160000 |
| KNN: Fine | 100 | 27000 | 100 | 24000 | 100 | 55000 | 100 | 91000 |
| KNN: Weighted | 100 | 25000 | 100 | 27000 | 100 | 48000 | 100 | 58000 |
| SVM: Cubic | 100 | 7900 | 100 | 16000 | 69.9 | 28000 | 69.9 | 28000 |
| Ensemble Bagged Trees | 100 | 5700 | 100 | 9900 | 100 | 11000 | 100 | 11000 |
| Ensemble Subspace KNN | 36.4 | 6900 | 100 | 5200 | 100 | 5000 | 100 | 6500 |

Table 8: Experimental factors and levels

| N | Factor | Unit | Symbol | Coded level -1 | Coded level +1 |
| --- | --- | --- | --- | --- | --- |
| 1 | Mean | – | A | On | Off |
| 2 | Standard deviation | – | B | On | Off |
| 3 | Kurtosis | – | C | On | Off |
| 4 | Skewness | – | D | On | Off |
| 5 | Median absolute deviation | – | E | On | Off |
| 6 | Root-mean-square level | – | F | On | Off |
| 7 | Zero crossing rate | – | G | On | Off |
| 8 | Interquartile range | – | H | On | Off |
| 9 | 75th percentile of data set | – | I | On | Off |
| 10 | SNR | dB | J | On | Off |
| 11 | Observation time | μs | K | 100 | 500 |

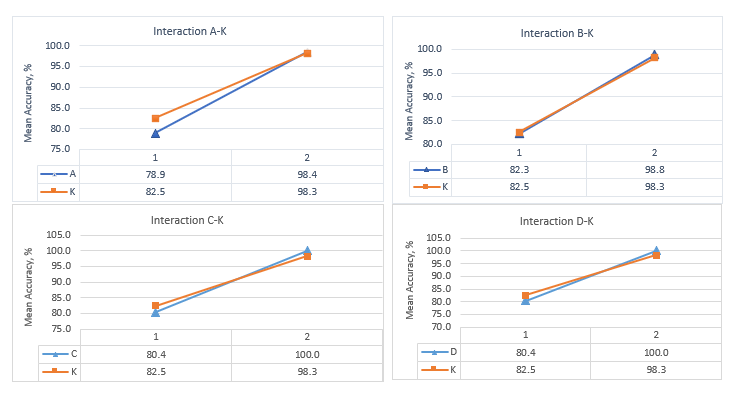

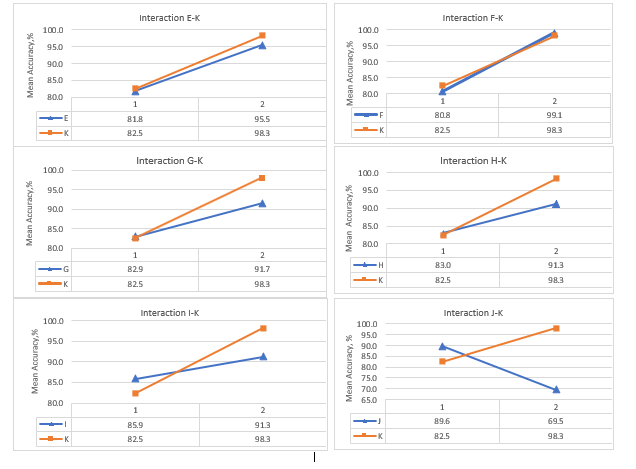

The design factors of interest are those that have the highest main effect on the response parameters: classification accuracy and speed. We have been looking at both the main effects of the independent variables and the interactions between the input parameters (A-J) and observation time (K) for the best performing classifier in terms of accuracy and speed. We have 10 design factors corresponding to the features, referred to as (A-J), and observation time, referred to as (K). Each feature factor has 2 levels: high (+1), the feature is used for classification, or low (-1), the feature is not used for classification. Observation time has also been varied at two levels: high (+1), corresponding to 500 microseconds, and low (-1), corresponding to 100 microseconds. We have considered one noise factor corresponding to the propagation channel model, with two levels: the AWGN channel with SNR ranging from 1 dB to 30 dB (-1), and Rician multipath propagation with AWGN at SNR = 30 dB (+1). The full factorial design would result in 2^10 = 1024 experiments. To reduce the number of experiments, this study has been limited to a fractional factorial design with 13 experiment runs. In each of the first ten runs only one feature is used for classification, independently from the others, at 100 microseconds observation time, since we are mostly interested in the performance for the shortest observation time. The eleventh run has been selected to investigate the effect of observation time; the twelfth and thirteenth runs have been included to study the effect of observation time independently from the classification features, with all nine spectral-based statistical features used as classification input.
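The size of the full design and the layout of the first ten runs can be sketched in Python as follows. The coding follows the text (+1 means the feature is used, -1 means it is not, and K = -1 is the 100 microsecond observation time); the variable and function names are illustrative assumptions, and runs eleven to thirteen are not reproduced because their exact factor settings are not listed here.

```python
from itertools import product

FEATURES = "ABCDEFGHIJ"  # coded factor symbols for the ten feature factors

# Every combination of two levels for ten factors: 2^10 runs.
full_factorial_runs = 2 ** len(FEATURES)

# The same count obtained by explicit enumeration for a small case (3 factors).
small_design = list(product((-1, +1), repeat=3))


def one_feature_runs(features=FEATURES, observation_level=-1):
    """Runs in which exactly one feature is at +1 and all others at -1."""
    runs = []
    for used in features:
        run = {f: (+1 if f == used else -1) for f in features}
        run["K"] = observation_level
        runs.append(run)
    return runs


first_ten_runs = one_feature_runs()
```

Each dictionary in `first_ten_runs` is one row of the design matrix, which keeps the mapping between run number and active feature explicit.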

The main effect is defined as the overall effect of an independent variable in the complex design. The effect of a factor is the difference between the mean responses at its high (+1) and low (-1) levels. In this notation, the main effect of A is the mean of the observations at the high level of A minus the mean of the observations at the low level of A. Interaction is defined here as the effect of one independent variable depending on the other independent variable, i.e., it describes the combined effect of the independent variables considered simultaneously [15]. Tables 9-13 summarize the experimental design and results, namely the main effect of every factor on classification accuracy and speed, for the five best performing classifiers: fine decision trees, fine KNN, weighted KNN, ensemble bagged trees with 59886 splits and 30 learners, and ensemble subspace KNN with 9 subspace dimensions and 30 learners.
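The definition of the main effect translates directly into a few lines of Python. In the sketch below the interaction of two factors is estimated in the usual way for two-level designs, as the main effect of the element-wise product of their coded columns; that estimator, the function names, and all numbers are illustrative assumptions and are not taken from Tables 9-13.

```python
def main_effect(levels, responses):
    """Mean response at level +1 minus mean response at level -1."""
    high = [r for lvl, r in zip(levels, responses) if lvl == +1]
    low = [r for lvl, r in zip(levels, responses) if lvl == -1]
    return sum(high) / len(high) - sum(low) / len(low)


def interaction_effect(levels_a, levels_b, responses):
    """Main effect of the product column A*B (two-level coded designs)."""
    product_column = [a * b for a, b in zip(levels_a, levels_b)]
    return main_effect(product_column, responses)


# Made-up 2x2 example: coded levels of two factors and a response per run.
A = [-1, +1, -1, +1]
B = [-1, -1, +1, +1]
response = [10.0, 20.0, 30.0, 60.0]

effect_a = main_effect(A, response)                # (20 + 60)/2 - (10 + 30)/2
effect_ab = interaction_effect(A, B, response)     # (10 + 60)/2 - (20 + 30)/2
```

Because the effect is a difference of means, a factor whose single "used alone" run performs very poorly can still show a large effect in magnitude, so the sign and the underlying responses need to be read together.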

The overall highest main effects on classification accuracy and speed have been observed for the fine decision trees classifier. Tables 9-13 show a relatively high main effect for using or not using SNR for classification. These tables, which summarize the performance of the classifiers, show that removing the SNR value as an input to the classifier does not affect the response parameters significantly; on the other hand, using the SNR value alone for classification, as in experiment run N10 (which makes no practical sense), results in very low classification accuracy. Since the main effect is the difference between the mean responses at the high and low levels, SNR shows a high main effect as a classification input parameter. For example, the main effect of using SNR (J) as classification input on classification accuracy for fine decision trees is –20.0, while for kurtosis (C) it is 19.6. The classification accuracy when only (C) is used for classification (experiment run N3) is 100% for both Rician and AWGN channels, whereas when only (J) is used it is 8.6% for both Rician and AWGN channels. This makes the highest main effect of the SNR input a questionable result for practical application. The value of the main effect should therefore be interpreted only in combination with the value of the response parameter: classification accuracy and speed.

The highest classification speed of 170 000 objects per second at 100% classification accuracy has been demonstrated by fine decision trees using only one classification input, the skewness of the wavelet coefficients derived from the signal observed during 100 microseconds, for the AWGN channel model. For the Rician channel with AWGN, the classification speed has been lower, at 130 000 objects/s. Both skewness and kurtosis have shown the highest main effect on classification accuracy for fine trees. Using the mean value as the only input parameter to the fine trees classifier, however, has shown less robust results in terms of classification accuracy: 94.5% for the Rician channel with AWGN, compared with 100% for the AWGN channel. The mean value shows the second-highest main effect on the classification accuracy, 19.5, for the fine decision trees and the highest main effects of 10.9, 11.1, 11.5, and 10.9 for the other four classifiers: fine KNN, weighted KNN, ensemble bagged trees, and ensemble subspace KNN, respectively. It also shows the highest main effect on the classification speed for all five selected classifiers.